Generative AI Challenges Slides | Ethical, Trust, Copyright

CHALLENGES OVERVIEW


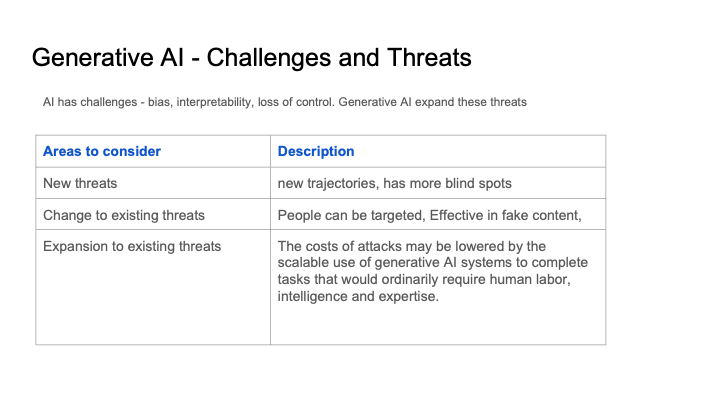

THREATS


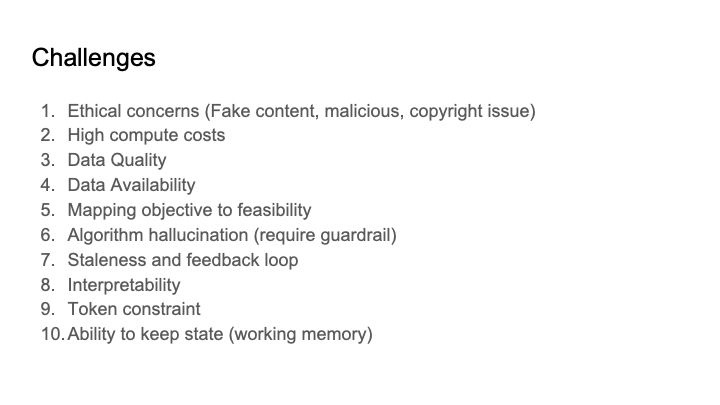

TYPE OF CHALLENGES


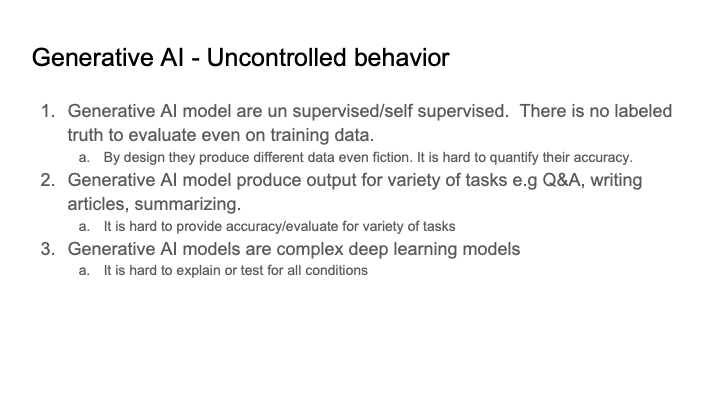

UNCONTROLLED BEHAVIOR


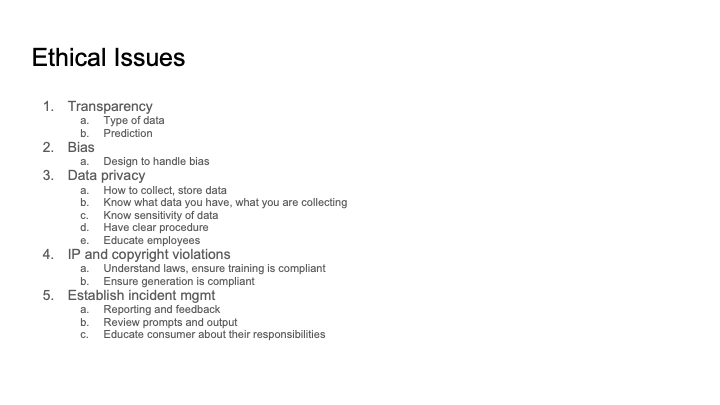

ETHICAL ISSUES


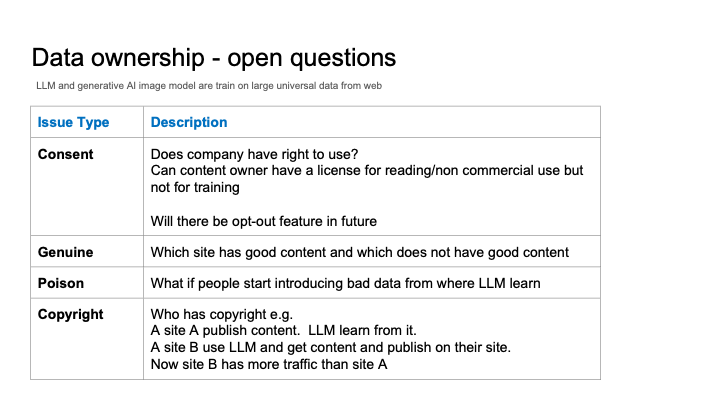

DATA OWNERSHIP

Summary of Generative AI threats, challenges, risks

Dimensions of threats

Ethical challenges

Environment challenges

Gen AI Risks and Threats

Generative AI: Challenges and Ethical Considerations

Generative Artificial Intelligence (AI) has revolutionized various industries by enabling machines to create content, such as images, text, and music, that mimics human creativity. Alongside these advances, generative AI poses several challenges and ethical issues that need to be addressed.

Addressing these challenges and ethical considerations is crucial to harnessing the potential benefits of generative AI while mitigating its risks. Stakeholders must collaborate to establish guidelines and regulations that promote responsible use of this technology.

Explainability challenges

Interpreting the output of generative AI raises several challenges:

Lack of transparency: Generative AI models are often complex and opaque, so it is hard to understand how they arrive at a given output. That in turn makes it hard to interpret the output and to identify potential biases or errors.

Data bias: Generative AI models are trained on large datasets. If those datasets are biased, the models inherit that bias and can generate biased or discriminatory output.

Unintended consequences: Generative AI models can produce a wide variety of output, including text, code, images, and music, and that output can be misused. For example, a generative AI model could be used to generate fake news articles or to create deepfakes.

Despite these challenges, generative AI is a powerful tool with many potential uses. It is important to be aware of the challenges in interpreting it and to take steps to mitigate them. Some tips for interpreting generative AI:

Understand the model: Knowing how the generative AI model works helps you interpret its output and identify potential biases or errors.

Be aware of data bias: Check the training data for potential bias and take steps to mitigate it.

Consider the potential unintended consequences: Stay aware of the unintended consequences that using these models can have.
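
As a rough illustration of the data bias tip, the sketch below reports how large a share of a dataset each group holds and flags groups that fall below a threshold. The function name `representation_report`, the `min_share` threshold, and the sample labels are all hypothetical, chosen only for this example; a real bias audit would look at far more than group counts.

```python
from collections import Counter


def representation_report(labels, min_share=0.2):
    """Return each group's share of the data and whether it is below min_share.

    `labels` is any iterable of group labels (hypothetical input format).
    """
    counts = Counter(labels)
    total = sum(counts.values())
    report = {}
    for group, count in counts.items():
        share = count / total
        report[group] = {
            "share": share,
            "under_represented": share < min_share,
        }
    return report


# Made-up labels: group "a" dominates, groups "b" and "c" are rare.
sample_labels = ["a"] * 8 + ["b", "c"]
report = representation_report(sample_labels)

for group, stats in sorted(report.items()):
    flag = "under-represented" if stats["under_represented"] else "ok"
    print(f"{group}: {stats['share']:.0%} ({flag})")
```

A check like this only surfaces imbalance in whatever labels are available. It does not show whether the model's output is actually biased, which still requires reviewing the output itself.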


Feedback loop challenges

Generative AI models are trained on large datasets. If that data is not updated regularly, the model can become stale and produce outdated or inaccurate output. This is known as the staleness challenge.

Generative AI models can also be susceptible to feedback loops, which occur when a model is trained on data that the model itself generated. The output can then become increasingly biased or inaccurate. This is known as the feedback loop challenge.

The following steps help mitigate staleness and feedback loops:

Use a variety of data sources: Training a generative AI model on a variety of data sources helps prevent it from becoming biased or inaccurate, and is the main way to address the feedback loop challenge.

Monitor the output of the model: Watch the output of the generative AI model for signs of bias or inaccuracy. If any problems are identified, the model can be updated or retrained to address them.

Update the model regularly: Regularly update the data used to train the model, either by collecting new data or by updating existing data with new information. This addresses the staleness challenge and helps keep the model up to date and accurate.
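
The mitigation steps above can be sketched in code, under some loud assumptions: every training record carries a provenance tag and a collection date, model-generated records are tagged `"model"`, and anything older than a fixed age counts as stale. The `Record` type, the field names, and the 365-day cutoff are hypothetical and not taken from any particular library or pipeline.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class Record:
    text: str
    source: str         # hypothetical provenance tag: "human" or "model"
    collected_on: date  # when the record was collected


def synthetic_share(records):
    """Fraction of records that were generated by a model (feedback loop signal)."""
    if not records:
        return 0.0
    return sum(r.source == "model" for r in records) / len(records)


def filter_training_records(records, today, max_age_days=365):
    """Drop model-generated records and records older than max_age_days."""
    cutoff = today - timedelta(days=max_age_days)
    return [
        r for r in records
        if r.source != "model" and r.collected_on >= cutoff
    ]


# Made-up records for illustration only.
records = [
    Record("recent human text", "human", date(2023, 6, 1)),
    Record("model output", "model", date(2023, 12, 1)),
    Record("old human text", "human", date(2021, 1, 1)),
    Record("newer human text", "human", date(2023, 12, 15)),
]

today = date(2024, 1, 1)
share = synthetic_share(records)
kept = filter_training_records(records, today)
print(f"model-generated share before filtering: {share:.0%}")
print(f"records kept for training: {len(kept)} of {len(records)}")
```

Filtering by provenance tag only works when that tag is reliable. Where it is not, monitoring the model's output for drift remains the fallback described above.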
